Interactive demo — real PRs, real findings

VCR reviews its own codebase on every pull request. Trace the triage flow, see what each layer catches, and follow findings back to the GitHub PR.

A multi-layered review process for enterprise teams shipping AI-generated code. Part of the Visdom AI-Native SDLC.


Your CI says the code is fine. Your tests pass. But the AI wrote the tests too.

VCR is not a SaaS product and not a vendor service. It's a review process with an open-source reference implementation — opinionated patterns, six runnable layers, and a pipeline you run inside your own CI/CD, against your own LLM provider, behind your own network boundary.

VirtusLab deploys VCR as part of Visdom engagements: we embed with your platform engineers, configure the pipeline for your stack, tune the lenses to your conventions, and hand it over. Capability transfer from day one. Your team owns and operates the process. No ongoing SaaS dependency.

Evaluation methodology →

Deployment model

Every PR passes through layers of increasing depth. Fast and cheap for trivial changes, thorough for risky ones. A LOW-risk PR gets feedback in under 2 minutes at ~$0.05.

Full architecture reference →

Risk scoring, routing. Instant.

Linters, SAST, pattern checks. <30s.

LLM review with full context. <2min.

Multi-pass analysis, security, arch. <5min.
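The layered flow above can be sketched as a small routing table. This is a minimal illustration under stated assumptions, not the reference implementation: the layer names, the `HIGH` and `CRITICAL` labels, and the rule that a LOW-risk PR stops after the LLM review are all hypothetical. Only the time budgets come from this page, and they are read here as elapsed time until feedback arrives.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str            # hypothetical label; the page does not name the layers
    budget_seconds: int  # upper bound quoted on the page (0 stands for "instant")


# Order matters: each layer is deeper and slower than the one before it.
LAYERS = [
    Layer("triage", 0),           # risk scoring, routing
    Layer("deterministic", 30),   # linters, SAST, pattern checks
    Layer("llm_review", 120),     # LLM review with full context
    Layer("deep_analysis", 300),  # multi-pass analysis, security, architecture
]

# Assumed ordering of risk levels, lowest first.
RISK_ORDER = ["LOW", "MEDIUM", "HIGH", "CRITICAL"]


def layers_for(risk: str) -> list[Layer]:
    """Return the layers a PR passes through for a given risk level."""
    if risk not in RISK_ORDER:
        raise ValueError(f"unknown risk level: {risk}")
    # Assumption: LOW stops after the LLM review; MEDIUM and above add the deep pass.
    depth = 3 if risk == "LOW" else 4
    return LAYERS[:depth]


def feedback_deadline_seconds(risk: str) -> int:
    """Budget of the deepest layer that runs, read as time until feedback."""
    return layers_for(risk)[-1].budget_seconds
```

Under these assumptions, `feedback_deadline_seconds("LOW")` is 120, which lines up with the "under 2 minutes" figure quoted for LOW-risk PRs.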

Each PR gets a risk level based on path classification, diff size, coverage delta, and module stability. Only MEDIUM+ risk triggers deep analysis.

See before/after scenarios →

Config, docs, deps. Auto-approved or light scan.

Business logic. Standard LLM review with context.

Security-sensitive, cross-service. Multi-pass analysis.

Auth, payments, data migration. Full depth + human gate.
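One way to picture the triage step is a function that starts from the riskiest path in the PR and escalates when other signals look bad. The sketch below is illustrative only: the glob patterns, the thresholds (400 changed lines, a drop of one coverage point), the one-step escalation rule, and the `HIGH` and `CRITICAL` labels are assumptions, not values taken from VCR.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase

LEVELS = ["LOW", "MEDIUM", "HIGH", "CRITICAL"]  # assumed ordering, lowest first

# Hypothetical path rules; the first matching pattern wins, riskiest listed first.
PATH_RULES = [
    ("*auth*", "CRITICAL"),
    ("*payment*", "CRITICAL"),
    ("*migration*", "CRITICAL"),
    ("*security*", "HIGH"),
    ("*.md", "LOW"),
    ("docs/*", "LOW"),
    ("*.lock", "LOW"),
]


@dataclass
class PullRequest:
    paths: list[str]
    lines_changed: int
    coverage_delta: float  # percentage points; negative means coverage dropped
    unstable_module: bool  # e.g. the touched module has a history of reverts


def classify_path(path: str) -> str:
    """Map a file path to a base risk level."""
    for pattern, level in PATH_RULES:
        if fnmatchcase(path, pattern):
            return level
    return "MEDIUM"  # assumed default: ordinary business logic


def risk_level(pr: PullRequest) -> str:
    """Take the riskiest path, then escalate one step on any aggravating signal."""
    base = max((LEVELS.index(classify_path(p)) for p in pr.paths), default=0)
    aggravated = (
        pr.lines_changed > 400
        or pr.coverage_delta < -1.0
        or pr.unstable_module
    )
    return LEVELS[min(base + int(aggravated), len(LEVELS) - 1)]
```

With these rules, a small docs-only PR stays LOW, while any PR touching a path that matches `*auth*` lands at CRITICAL regardless of size.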

Each review is fed pre-indexed knowledge about the codebase: ownership, dependencies, commit history, conventions, and test reliability data.

Explore ViDIA context engine →

Context sources

Patterns specific to AI-generated code that conventional CI and human reviewers typically miss. Each is a dedicated Review Lens in Layer 3.

See real examples →

Tests that mirror implementation instead of verifying behavior.

Calls to methods or endpoints that don't exist in your codebase.

AI-generated code that ignores your team's established patterns.

Unnecessary Factory patterns, abstractions, and complexity.
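The first pattern in the list above, a test that mirrors the implementation, is easiest to see side by side with a behavioral test. The function and both tests below are hypothetical and written only for illustration; they are not taken from the VCR repository.

```python
def apply_discount(price: float, percent: float) -> float:
    """Intended behavior: take `percent` percent off `price`."""
    # Bug: the discount is added instead of subtracted.
    return price + price * percent / 100


def mirror_test() -> bool:
    """Recomputes the expected value with the implementation's own formula."""
    price, percent = 200.0, 10.0
    expected = price + price * percent / 100  # copied from the code under test
    return apply_discount(price, percent) == expected


def behavior_test() -> bool:
    """Pins the expected value to the requirement: 10% off 200 is 180."""
    return apply_discount(200.0, 10.0) == 180.0
```

`mirror_test()` returns `True` even though the function is wrong, because the test repeats the bug. `behavior_test()` returns `False` and exposes it. Coverage is identical in both cases, which is why a coverage number alone cannot tell the two apart.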

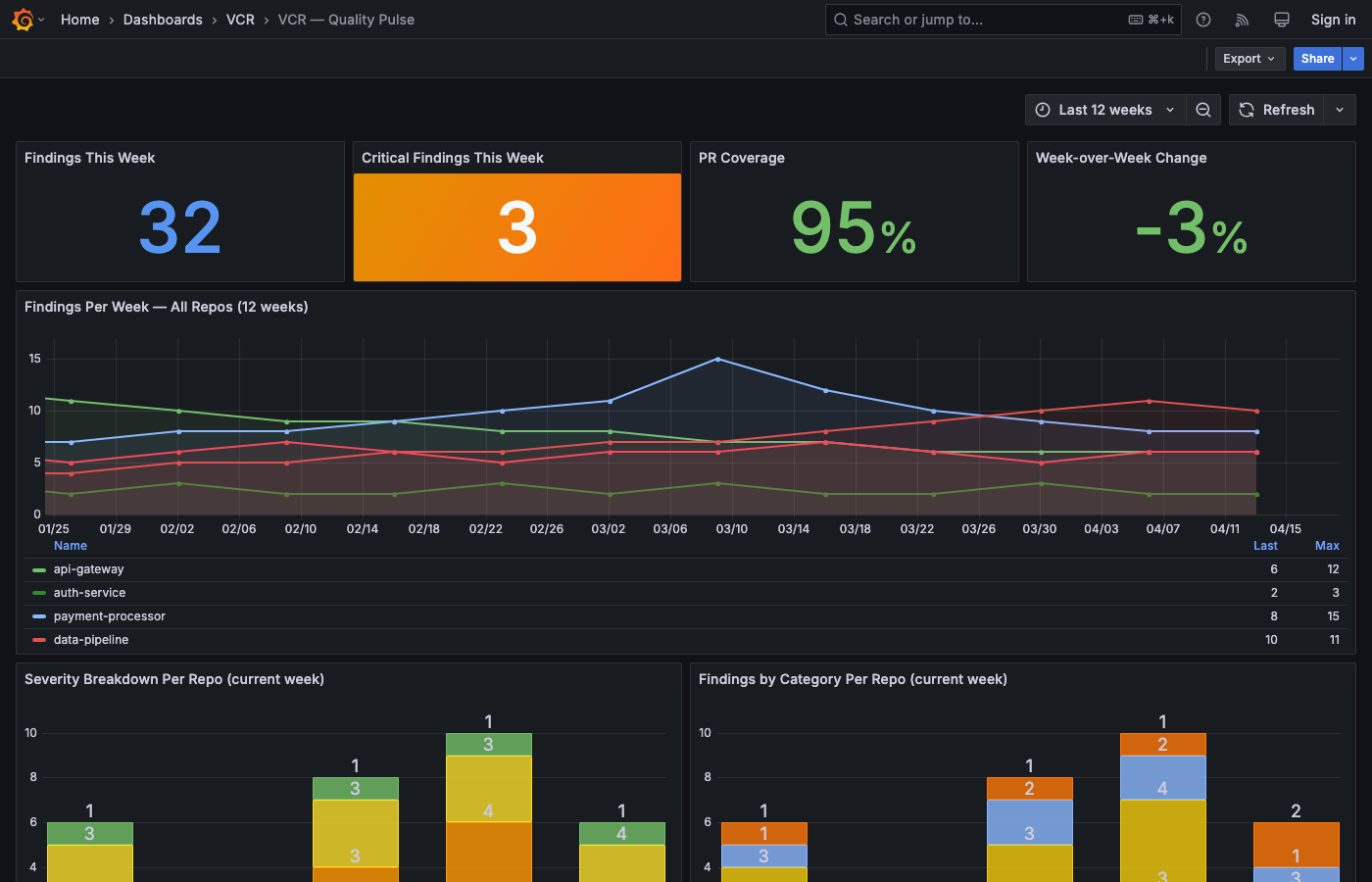

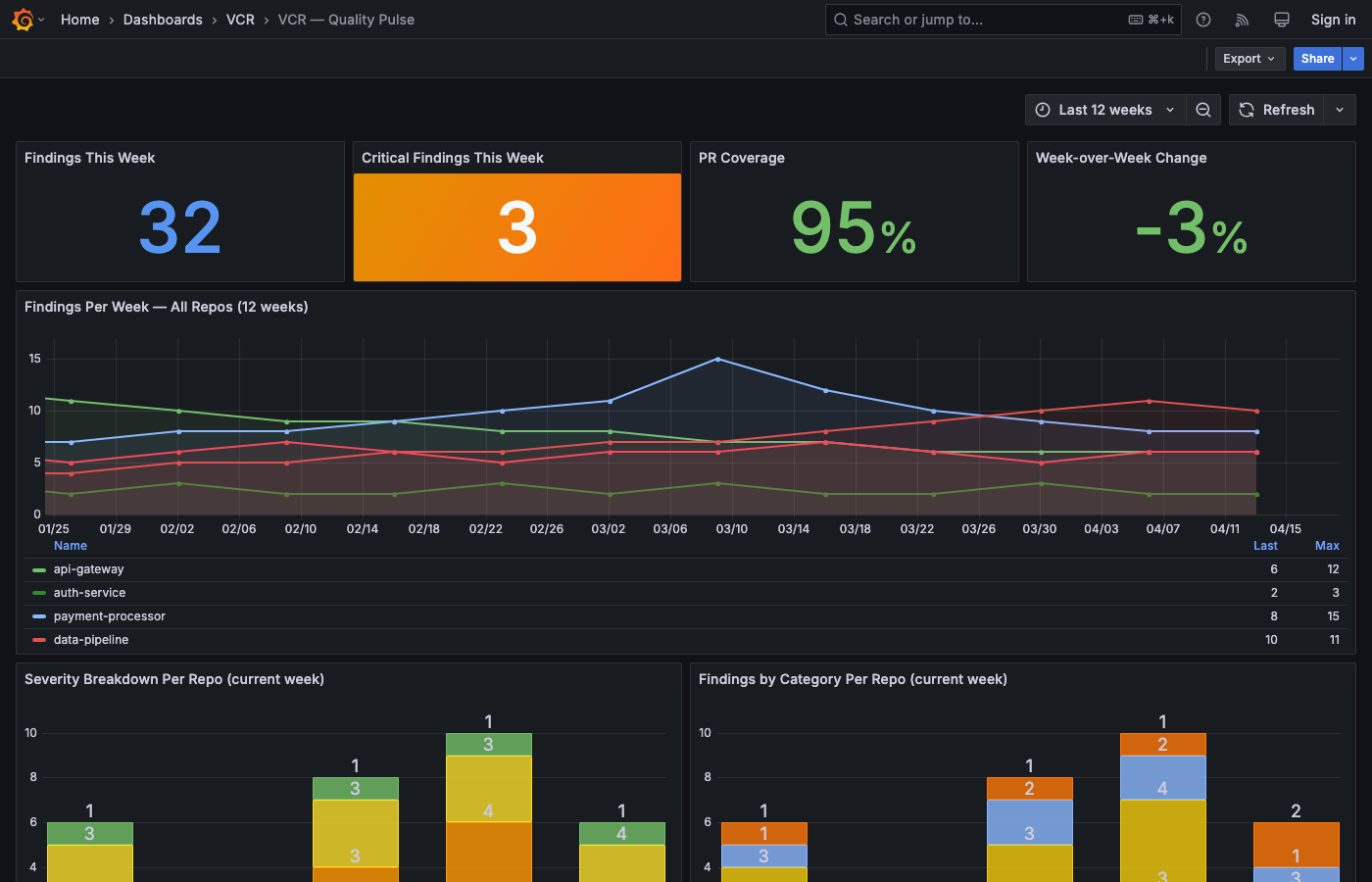

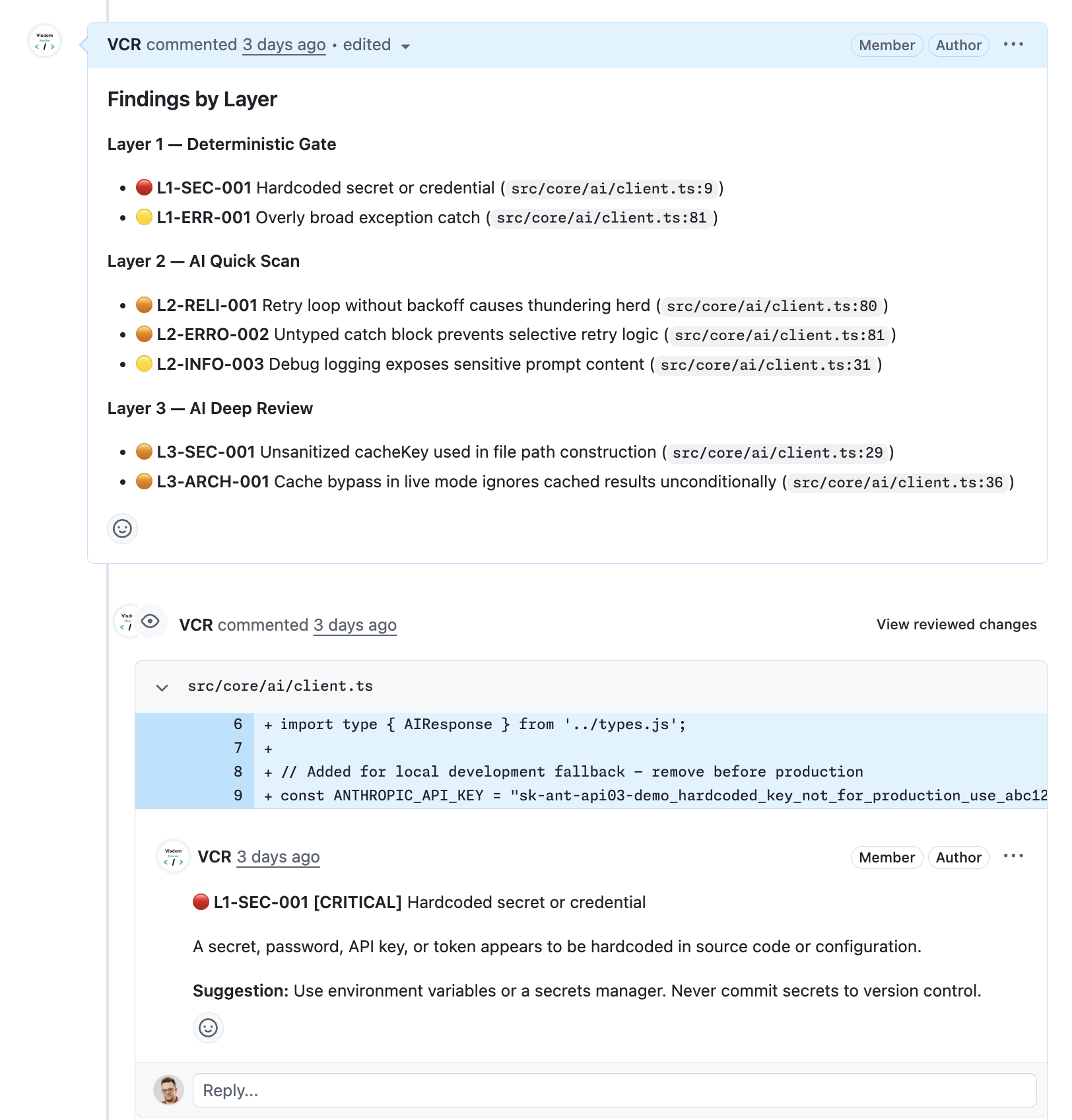


Findings grouped by layer, linked to the exact line. Every comment names the rule, the risk level, and a concrete fix — not a vague hint.
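As a rough sketch of the shape such a finding might take, the record below carries the fields named above (layer, exact line, rule, risk level, fix) and groups them by layer. The field names, the rule identifiers, and the sample data are hypothetical; the actual comment format used by VCR is not shown on this page.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    layer: int  # which review layer produced the finding
    path: str   # file the finding points at
    line: int   # exact line in that file
    rule: str   # name of the rule that fired (hypothetical identifiers below)
    risk: str   # risk level attached to the finding
    fix: str    # concrete suggested fix


def group_by_layer(findings: list[Finding]) -> dict[int, list[Finding]]:
    """Group findings by layer, ordered by layer, then by file and line."""
    grouped: dict[int, list[Finding]] = defaultdict(list)
    for finding in findings:
        grouped[finding.layer].append(finding)
    return {
        layer: sorted(items, key=lambda f: (f.path, f.line))
        for layer, items in sorted(grouped.items())
    }


def render(finding: Finding) -> str:
    """Format one finding as a single review comment line."""
    return (
        f"[{finding.risk}] {finding.rule} at "
        f"{finding.path}:{finding.line}. Fix: {finding.fix}"
    )


# Made-up sample data, for illustration only.
SAMPLE = [
    Finding(3, "src/auth/session.py", 42, "mirror-test", "HIGH",
            "assert against a fixed expected value"),
    Finding(1, "src/auth/session.py", 7, "unused-import", "LOW",
            "remove the import"),
]
```

Calling `group_by_layer(SAMPLE)` returns the Layer 1 finding before the Layer 3 one, and `render` turns each into a one-line comment that names the rule, the risk level, and the fix.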

VCR is one of four components in Visdom, VirtusLab's AI-Native SDLC.

Pre-indexed code expertise, dependency graphs, PR history

Sub-2-min CI loops, caching, incremental builds, test impact analysis

Multi-layered AI code review. You are here.

Governance: audit trail, auto-evaluation, EU AI Act compliance

Read the thinking behind it: The AI-Native SDLC series

Reference material, architecture docs, and real-world scenarios.

Clone the repo and run the pipeline locally against a deliberately flawed PR (auth service with 12 passing tests and 94% coverage). The full 4-layer review executes end-to-end. No API key needed — cached responses included.

Setup & commands →

Quick start

```shell
git clone https://github.com/VirtusLab/visdom-code-review
cd visdom-code-review/demo
npm install

# Narrated walkthrough (auto-paced)
npm run demo:narrate

# Interactive (press Enter to advance)
npm run demo:interactive

# Fast run (no narration)
npm run demo:local
```

Architecture, configuration, metrics framework, and reference implementations.